The neuron is the fundamental unit of the brain and the nervous system. Like a bridge connecting the external world to the human body, neurons carry sensory inputs, such as pain or temperature, and send commands to our muscles, allowing us to act in response to those inputs.

Their particular structure and physiology make these functions possible. Neurons resemble trees (Figure 1): dendrites branch out of the trunk, the soma (cell body), which in turn is anchored to a long, thin, root-like projection called the axon. The axon generates electrical signals and conducts them along its length; at its branching terminals, these signals prompt the release of neurotransmitters, small chemicals that can act as excitatory or inhibitory messengers.

When these “go” or “stop” signals leave an axon, the dendrites of the next neuron receive the message, and that neuron determines whether to forward the information. Neurons often establish contact with each other on dendritic spines, small leaf-like protrusions along the dendrites.

Depending on their role and location, neurons vary in size, shape and structure: some enable us to feel, smell and see the outside world, while others allow us to move in it. The most common type, however, is the interneuron; interneurons form complex circuits that help us react to external stimuli.

Neurons have essentially three functions: receiving information, processing it, and passing it on to target neurons. Unlike most other cells, mature neurons do not divide, and the adult brain produces new neurons in only a few regions, so most neurons that die are never replaced.
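As a rough illustration of these three functions, here is a McCulloch–Pitts-style threshold unit, a textbook simplification rather than a model of any real neuron. The function name `threshold_neuron`, the weights and the threshold of 1.0 are hypothetical values chosen for the example: positive weights play the role of excitatory “go” signals and negative weights the role of inhibitory “stop” signals.

```python
# A minimal sketch of the "receive, process, pass on" cycle as a
# McCulloch-Pitts-style threshold unit. Weights and threshold are
# illustrative assumptions, not measured values.

def threshold_neuron(inputs, weights, threshold=1.0):
    """Return 1 (fire) if the weighted input sum reaches the threshold, else 0."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Receive: each input is 1 (a spike arrived) or 0 (no spike).
    # Process: positive weights are excitatory "go" signals,
    # negative weights are inhibitory "stop" signals.
    total = sum(x * w for x, w in zip(inputs, weights))
    # Pass on: emit a spike only if the summed drive is large enough.
    return 1 if total >= threshold else 0


weights = [0.6, 0.6, -0.8]  # two excitatory inputs, one inhibitory input
print(threshold_neuron([1, 1, 0], weights))  # 1: both "go" inputs active
print(threshold_neuron([1, 1, 1], weights))  # 0: inhibition vetoes the spike
```

Real neurons integrate thousands of inputs over time, but the same logic of summing excitation against inhibition and comparing with a threshold is the core of many simplified models.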


Neural communication – spike trains

The human body is controlled by the nervous system, a network of nerve cells and fibers connecting the brain and spinal cord (together, the central nervous system) with the rest of the body. Neurophysiologists Edgar Douglas Adrian and Yngve Zotterman showed that sense organs respond to changes in their environment by sending messages, in the form of electrical signals, to the central nervous system. They found that the information transmitted by neurons is carried by so-called spike trains, sequences of brief electrical impulses called spikes1 (Figure 2).

Spike trains can be represented as binary signals: time is divided into short bins, with 1 marking a bin that contains a spike and 0 a bin without one. The pattern of spikes encodes sensory information, such as visual patterns, sounds, smells and tastes.
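A minimal sketch of this binary representation, assuming spike times recorded in milliseconds and a fixed bin width. The function `binarize` and the spike times are hypothetical, chosen only to show the conversion.

```python
# Hypothetical example: converting spike times into a binary spike train.
# The bin width and the spike times below are illustrative assumptions.

def binarize(spike_times_ms, duration_ms, bin_ms=1.0):
    """Return a list of 0/1 values, one per time bin.

    A bin holds 1 if at least one spike falls inside it, 0 otherwise.
    """
    n_bins = int(duration_ms // bin_ms)
    train = [0] * n_bins
    for t in spike_times_ms:
        if 0 <= t < n_bins * bin_ms:  # ignore spikes outside the recording
            train[int(t // bin_ms)] = 1
    return train


spikes = [1.2, 3.7, 4.1, 8.9]  # spike times in milliseconds (made up)
print(binarize(spikes, duration_ms=10, bin_ms=1))
# [0, 1, 0, 1, 1, 0, 0, 0, 1, 0]
```

The bin width matters: if two spikes fall into the same bin they collapse into a single 1, so bins are typically chosen short enough to hold at most one spike.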

A second, non-digital possibility is that neurons communicate through specialized intercellular connections called gap junctions, which allow ions and small molecules to pass directly from one cell to another. According to this view, information is transmitted between neurons through the electrical coupling that gap junctions provide2,3.
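The idea of electrical coupling can be sketched with two passive model cells joined by an ohmic gap junction, where the current flowing into one cell is proportional to the voltage difference between the two. This is a simplified illustration under assumed parameters: the leak conductance, capacitance, coupling strength `g_c`, injected current and the function `simulate_gap_junction` are all hypothetical, not taken from refs 2 or 3.

```python
# A minimal sketch of electrical coupling through a gap junction between two
# passive model cells. The junction is treated as ohmic:
#     I_gap (into cell i) = g_c * (V_j - V_i)
# All parameter values are illustrative assumptions, not measurements.

def simulate_gap_junction(g_c=0.5, steps=2000, dt=0.1):
    """Inject current into cell 1 only; return final voltages (mV) of both cells."""
    e_leak = -70.0  # resting potential (mV)
    g_leak = 1.0    # leak conductance (arbitrary units)
    c_m = 10.0      # membrane capacitance (arbitrary units)
    i_inj = 10.0    # constant current injected into cell 1
    v1 = v2 = e_leak
    for _ in range(steps):
        i_gap_1 = g_c * (v2 - v1)  # current entering cell 1 through the junction
        i_gap_2 = g_c * (v1 - v2)  # equal and opposite current entering cell 2
        dv1 = (-g_leak * (v1 - e_leak) + i_gap_1 + i_inj) / c_m
        dv2 = (-g_leak * (v2 - e_leak) + i_gap_2) / c_m
        v1 += dt * dv1  # explicit Euler update
        v2 += dt * dv2
    return v1, v2


coupled = simulate_gap_junction(g_c=0.5)
uncoupled = simulate_gap_junction(g_c=0.0)
print([round(v, 1) for v in coupled])    # [-62.5, -67.5]
print([round(v, 1) for v in uncoupled])  # [-60.0, -70.0]
```

With the assumed values, current injected into cell 1 also depolarizes cell 2 when the cells are coupled, whereas with `g_c` set to 0 cell 2 stays at its resting potential.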

Neural communication – chemical synapses

Neurons communicate with each other and with other cells via “chemical synapses” (Figure 1), the contact points at which the presynaptic (sending) neuron transmits its message to the postsynaptic (receiving) neuron4.

Inside the presynaptic neuron, round vesicles are filled with neurotransmitters. When the sending nerve cell transmits information, the neurotransmitters are released from their vesicles, diffuse across the narrow gap between the two neurons (the synaptic cleft) and bind to receptors on the postsynaptic dendrite. This binding is highly specific, since each neurotransmitter binds only to particular kinds of receptors.

Neural language – brain code

If we want to understand the highly specialized information-processing machine that is the brain, we need to understand the language of neurons: the neural code. In this code, single neurons carry information in their spike trains; within an individual neuron, the information seems to be coded in an ordered string of spikes, the basic elements of the neuronal language.

Spike trains can look like unpredictable storms of electrical impulses, and the shape of the electrical signal differs from one neuron to another. While we already know a great deal about how spikes are generated, the structure of the neural code remains a huge mystery5. Is the information hidden in the number of spikes, in their pattern, or in their precise arrival times? Everything hinges on this enigmatic code, which scientists are still trying to crack. So what are the candidates for the neural code?

Candidates for the Neural Code

Rate coding was first described in 1926 by Adrian and Zotterman1, who studied how sensory nerve fibers respond to the stretching of a small muscle in the frog. They found that impulses carrying information about the stimulus travel along the nerve fibers toward the central nervous system.

Adrian and Zotterman’s experiment showed how a single nerve cell codes sensory information. Based on these findings, scientists hypothesized that neurons code information in their Firing Rate: the average number of spikes per unit of time within a defined time window. As the intensity of the stimulus increases, the Firing Rate increases as well. Figure 3 shows how we can measure the brain’s electrical activity.
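Following that definition, a Firing Rate can be estimated by counting the spikes in a window and dividing by the window’s length. This sketch assumes spike times in seconds; `firing_rate` and the two example trains, standing in for a weak and a strong stimulus, are invented for illustration.

```python
# A minimal sketch of a firing-rate estimate: spike count divided by the
# length of the counting window. Spike times are made-up examples.

def firing_rate(spike_times_s, t_start, t_end):
    """Return the mean firing rate in spikes per second (Hz) within [t_start, t_end)."""
    if t_end <= t_start:
        raise ValueError("t_end must be greater than t_start")
    count = sum(1 for t in spike_times_s if t_start <= t < t_end)
    return count / (t_end - t_start)


weak_stimulus = [0.05, 0.31, 0.62, 0.90]            # 4 spikes in 1 s
strong_stimulus = [0.02, 0.11, 0.19, 0.30, 0.41,
                   0.52, 0.60, 0.71, 0.83, 0.95]    # 10 spikes in 1 s
print(firing_rate(weak_stimulus, 0.0, 1.0))    # 4.0
print(firing_rate(strong_stimulus, 0.0, 1.0))  # 10.0
```

Choosing the window is a trade-off: longer windows give steadier estimates but blur fast changes in the rate.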

Temporal coding assumes that information is carried by precise spike timing or by high-frequency fluctuations of the Firing Rate6. It links the timing of the output firing patterns to the inputs of the nervous system, so two spike trains with the same average rate can still carry different messages.
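To make the distinction concrete, this hypothetical example compares two invented spike trains with the same number of spikes in a 100 ms window. A rate code sees both as 50 Hz, while their timing, summarized here by the interspike intervals (the gaps between consecutive spikes), is clearly different. The helper names `rate_hz` and `interspike_intervals` are assumptions for this sketch.

```python
# Illustrative sketch: two invented spike trains with the same firing rate
# but different spike timing (spike times in ms).

def rate_hz(spike_times_ms, window_ms):
    """Mean firing rate in Hz over a window starting at 0 ms."""
    count = sum(1 for t in spike_times_ms if 0 <= t < window_ms)
    return 1000.0 * count / window_ms


def interspike_intervals(spike_times_ms):
    """Gaps (ms) between consecutive spikes, assuming sorted spike times."""
    return [b - a for a, b in zip(spike_times_ms, spike_times_ms[1:])]


regular = [10, 30, 50, 70, 90]  # evenly spaced spikes
bursty = [10, 12, 14, 80, 82]   # two short bursts

# A pure rate code sees no difference: both trains fire at 50 Hz.
print(rate_hz(regular, 100), rate_hz(bursty, 100))  # 50.0 50.0
# Their timing, summarized by the interspike intervals, clearly differs.
print(interspike_intervals(regular))  # [20, 20, 20, 20]
print(interspike_intervals(bursty))   # [2, 2, 66, 2]
```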

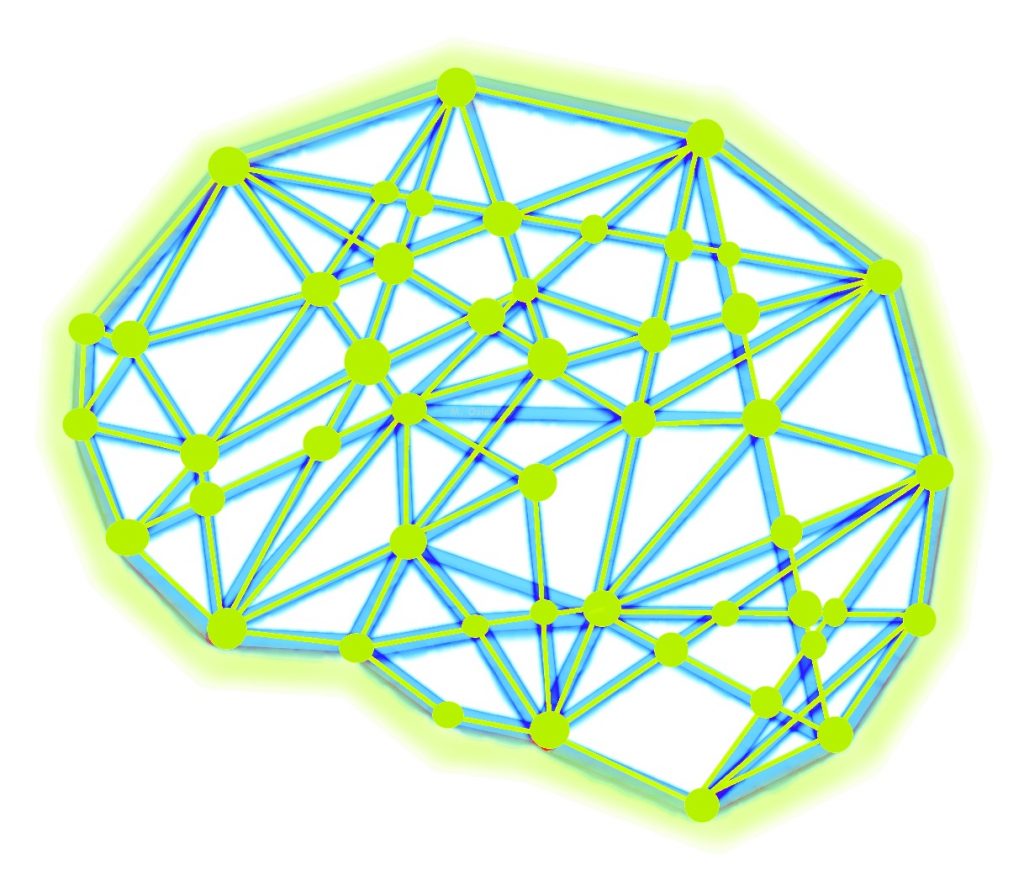

Population coding assumes that populations, or clusters, of nerve cells, rather than single neurons, encode messages7,8. In this view, a stimulus is represented by the combined activity of a group of neurons: combining the responses of the individual cells gives the information about the input. Recently, many experiments using electrodes implanted in damaged rat brains have been performed to optimize the encoding of brain signals (Figure 4)9.
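One classic way to combine a population’s responses is the “population vector”, shown here only as an illustration and not as the specific method of refs 7 or 8. Each hypothetical neuron has a preferred direction and a cosine-shaped tuning curve; the stimulus direction is read out as the activity-weighted sum of the preferred directions. The tuning curve, baseline, gain and function names are all assumptions.

```python
import math

# A minimal population-coding sketch using a population vector.
# The cosine tuning curve, baseline and gain are illustrative assumptions.

PREFERRED_DEG = [0, 45, 90, 135, 180, 225, 270, 315]  # one neuron per direction
BASELINE_HZ = 20.0
GAIN_HZ = 15.0


def population_response(stimulus_deg):
    """Firing rate of each neuron for a stimulus moving in direction stimulus_deg."""
    return [BASELINE_HZ + GAIN_HZ * math.cos(math.radians(stimulus_deg - p))
            for p in PREFERRED_DEG]


def decode_direction(rates):
    """Combine the whole population's activity into one direction estimate (degrees)."""
    x = sum((r - BASELINE_HZ) * math.cos(math.radians(p))
            for r, p in zip(rates, PREFERRED_DEG))
    y = sum((r - BASELINE_HZ) * math.sin(math.radians(p))
            for r, p in zip(rates, PREFERRED_DEG))
    return math.degrees(math.atan2(y, x)) % 360


rates = population_response(60)
print(round(decode_direction(rates), 1))  # 60.0
```

No single model neuron prefers 60°, yet the combined activity recovers the stimulus direction, which is the intuition behind population coding.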

Which code the brain uses has occupied researchers for many years. More recent studies indicate that the brain can switch from rate to temporal coding and vice versa10.

Attempts to map nature

To understand how information is processed, we need to model the nervous system at different structural scales, including the biophysical, circuit and system levels. Biologically realistic large-scale brain models require a huge number of neurons and connections between them. Brain-machine interfaces (BMIs) have proven helpful in this task. These technologies connect the brain to external devices that can be controlled by neural activity alone, without using muscles.
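At the biophysical level, one of the simplest models is the leaky integrate-and-fire neuron: the membrane voltage integrates the input current, leaks back toward rest, and emits a spike and resets whenever it crosses a threshold. This is a hedged illustration, far simpler than the detailed models used in large-scale brain reconstructions; the function `simulate_lif` and all parameter values are assumptions.

```python
# A minimal leaky integrate-and-fire (LIF) neuron. This is a standard
# textbook simplification; every parameter value here is an illustrative
# assumption (units: mV, ms, megaohms, nanoamperes).

def simulate_lif(i_input_na, t_max_ms=1000.0, dt_ms=0.1, tau_ms=10.0,
                 v_rest_mv=-70.0, v_thresh_mv=-54.0, v_reset_mv=-70.0,
                 r_m_mohm=10.0):
    """Return spike times (ms) for a constant input current.

    The membrane follows tau * dV/dt = -(V - V_rest) + R * I (Euler steps);
    when V reaches threshold, a spike is recorded and V is reset.
    """
    v = v_rest_mv
    spike_times = []
    for step in range(int(t_max_ms / dt_ms)):
        dv = (-(v - v_rest_mv) + r_m_mohm * i_input_na) / tau_ms
        v += dt_ms * dv
        if v >= v_thresh_mv:
            spike_times.append((step + 1) * dt_ms)
            v = v_reset_mv
    return spike_times


for current in (1.0, 2.0, 3.0):
    n_spikes = len(simulate_lif(current))
    print(f"{current} nA -> {n_spikes} spikes in 1 s")
# 1.0 nA never reaches threshold (the voltage settles at -60 mV);
# 2.0 and 3.0 nA fire repeatedly, and the stronger current fires more often.
```

Stronger input produces a higher spike count, which links this single-neuron model back to the rate code.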

An interesting BMI example is the research conducted by Miguel Nicolelis’ team11. They taught monkeys to play a simple computer game, using either their hands or their neuronal activity alone. The experiment showed that some neurons adapted to represent behaviorally significant movement parameters.

More recently, at EPFL (École polytechnique fédérale de Lausanne), Professor Henry Markram and his team in Geneva led the Blue Brain Project, a multi-million Swiss research initiative that aims to build digital reconstructions of the human brain12. So far, Markram’s team has generated statistical representations of 10 million neurons and has modeled a network with 88 billion synaptic connections.

Once we understand the neural code, we will probably be able to solve the puzzle of perception, action, thought and consciousness. It will be possible to create effective brain-machine interfaces that enable control of wheelchairs, prosthetic limbs and even video games. Let’s see who cracks the code first.

This article was the joint work of Agnieszka Pregowska (Institute of Fundamental Technological Research, Polish Academy of Sciences), Magdalena Garlińska (National Center for Research and Development), and Magdalena Osial (University of Warsaw).

References

[1] Adrian E. D., Zotterman Y., The impulses produced by sensory nerve endings: Part 2. The response of a single end-organ, The Journal of Physiology 61(2), 151–171, 1926.

[2] Roy K., Kumar S., Bloomfield S. A., Gap junctional coupling between retinal amacrine and ganglion cells underlies coherent activity integral to global object perception, Proceedings of the National Academy of Sciences of the United States of America 114(38), E10484–E10493, 2017.

[3] Song J., Ampatzis K., Bjornfors R., El Manira A., Motor neurons control locomotor circuit function retrogradely via gap junctions, Nature 529, 399–402, 2016.

[4] Colonnier M., Synaptic pattern on different cell types in the different laminae of the cat visual cortex. An electron microscope study, Brain Research 9(2), 268–287, 1968.

[5] van Hemmen J. L., Sejnowski T. J., 23 Problems in Systems Neuroscience, Oxford University Press, Oxford, 2006.

[6] Rieke F., Warland D., de Ruyter van Steveninck R., Bialek W., Spikes: Exploring the Neural Code, The MIT Press, Cambridge, Massachusetts, 1999.

[7] Onken A., Karunasekara P. P. C. R., Kayser C., Panzeri S., Understanding neural population coding: information theoretic insights from the auditory system, Advances in Neuroscience, Article ID 907851, 2014.

[8] Pouget A., Dayan P., Zemel R. Information processing with population codes, Nature Reviews Neuroscience 1(2), 125–132, 2000.

[9] Szczepanski J., Amigó J. M., Wajnryb E., Sanchez-Vives M. V., Application of Lempel–Ziv complexity to the analysis of neural discharges, Network: Computation in Neural Systems 14(2), 335–350, 2003.

[10] Pregowska A., Casti A., Kaplan E., Wajnryb E., Szczepanski J., Information processing in the LGN: a comparison of neural codes and cell types, Biological Cybernetics 113(4), 453–464, 2019.

[11] Miranda E. R., Brouse A., Toward direct brain-computer musical interfaces, Proceedings of the 5th International Conference on New Interfaces for Musical Expression (NIME ’05), Vancouver, Canada; National University of Singapore, 216–219, 2005.

[12] Blue Brain Project, EPFL, https://www.epfl.ch/research/domains/bluebrain/blue-brain/about